For years, artificial intelligence has primarily focused on processing single streams of data. We’ve seen incredible advancements in natural language processing (NLP), enabling machines to understand and generate human-like text. Computer vision has revolutionized image and video analysis. But the real world isn’t siloed into text boxes or image files. We experience it through a rich tapestry of sights, sounds, words, and more. This is where multimodal AI comes into play, marking a significant leap forward in how machines understand and interact with the world around them.

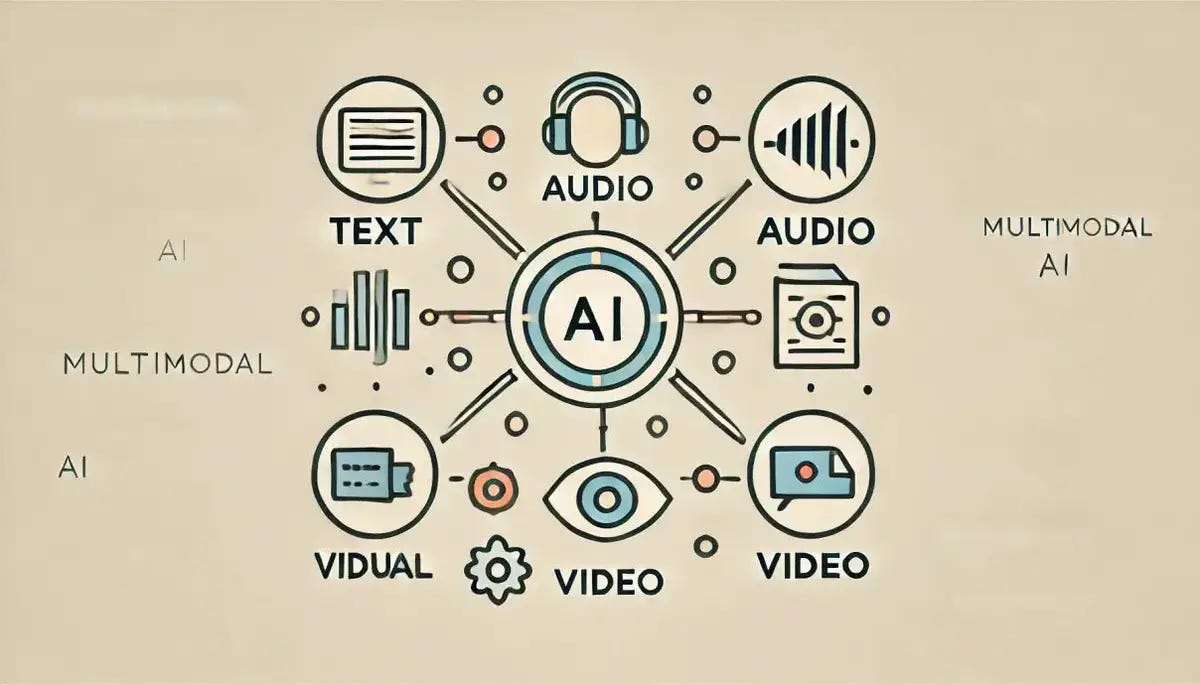

Multimodal AI aims to build systems that can process and understand information from multiple data modalities, such as text, images, audio, video, and sensor data, simultaneously. By integrating these diverse inputs, AI can gain a more comprehensive and nuanced understanding of complex situations. Think about it: a picture is worth a thousand words, but combining that picture with a descriptive caption provides even richer context. Similarly, understanding a video requires processing both the visual frames and the accompanying audio.

This capability opens up a world of possibilities across various industries. Imagine a customer service chatbot that can not only understand your text query but also analyze an image you upload of a damaged product to provide more accurate assistance. Consider healthcare applications where AI can analyze medical images, patient history (text data), and even physiological sensor data to provide more accurate diagnoses and personalized treatment plans.

The development of such sophisticated systems requires specialized expertise. Companies looking to leverage the power of multimodal AI are increasingly seeking out partnerships with experienced firms. In a hub of technological innovation like Chicago, several companies are at the forefront of this exciting field. Whether you are looking for an AI development company in Chicago to build a custom multimodal solution, need to hire an AI developer with expertise in integrating different data modalities, or are exploring options to stay ahead of the curve, Chicago’s growing AI ecosystem offers a wealth of talent and resources.

The demand for AI development services in Chicago that encompass multimodal capabilities is rising rapidly. Businesses are recognizing the competitive advantage that can be gained by building AI systems that can “see,” “hear,” and “understand” the world in a way that more closely mirrors human cognition. This is driving growth among AI development companies that specialize in this cutting-edge technology.

Key Benefits of Multimodal AI:

- Enhanced Understanding: By processing multiple data types, AI can gain a more holistic and accurate understanding of the world.

- Improved Accuracy: Integrating information from different sources can reduce ambiguity and lead to more reliable predictions and decisions.

- More Human-like Interaction: Multimodal AI can enable more natural and intuitive interactions between humans and machines.

- New Applications: It unlocks a range of new applications that were previously impossible with single-modality AI.

- Better Contextual Awareness: AI systems can better understand the context of a situation by considering various sensory inputs.

Challenges in Multimodal AI Development:

Despite its immense potential, developing multimodal AI systems presents several challenges:

- Data Heterogeneity: Different modalities have vastly different structures and formats, making it challenging to integrate and process them effectively.

- Feature Fusion: Determining the best way to combine features extracted from different modalities is a complex research problem.

- Temporal Alignment: For modalities like video and audio, ensuring proper temporal alignment is crucial for understanding events accurately.

- Computational Complexity: Processing and integrating multiple data streams can be computationally expensive.

- Lack of Large-Scale Multimodal Datasets: Training robust multimodal models requires large, diverse datasets that are often not readily available.
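
Two of the challenges above, temporal alignment and feature fusion, can be made more concrete with a small sketch. The example below is only an illustration under stated assumptions: the function names (`nearest_index`, `l2_normalize`, `align_and_fuse`) are hypothetical, the feature vectors are made-up numbers rather than outputs of a real model, and nearest-timestamp matching followed by simple concatenation is just one of many possible alignment and fusion strategies.

```python
from bisect import bisect_left


def nearest_index(timestamps, t):
    """Return the index of the timestamp closest to t.

    Assumes `timestamps` is sorted in ascending order.
    On an exact tie, the earlier timestamp wins.
    """
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbour is closer to t.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def l2_normalize(vec):
    """Scale a vector to unit length so no modality dominates by magnitude."""
    norm = sum(x * x for x in vec) ** 0.5
    return [x / norm for x in vec] if norm > 0 else list(vec)


def align_and_fuse(video_times, video_feats, audio_times, audio_feats):
    """For each video frame, find the nearest audio feature in time,
    normalize both vectors, and concatenate them into one fused vector."""
    fused = []
    for t, v in zip(video_times, video_feats):
        a = audio_feats[nearest_index(audio_times, t)]
        fused.append(l2_normalize(v) + l2_normalize(a))
    return fused


# Hypothetical data: video sampled every 0.5 s, audio every 0.25 s.
video_times = [0.0, 0.5, 1.0]
video_feats = [[3.0, 4.0], [1.0, 0.0], [0.0, 2.0]]
audio_times = [0.0, 0.25, 0.5, 0.75, 1.0]
audio_feats = [[1.0, 0.0], [0.0, 1.0], [0.0, 2.0], [2.0, 0.0], [3.0, 4.0]]

fused = align_and_fuse(video_times, video_feats, audio_times, audio_feats)
# One fused vector per video frame, each with 2 + 2 = 4 dimensions.
```

In practice, production systems often learn the fusion step (for example with attention over modalities) instead of concatenating fixed vectors, but the sketch shows why the two streams have to be aligned on a shared timeline before they can be combined at all.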

The Future is Multimodal:

Despite these challenges, the field of multimodal AI is rapidly advancing. Researchers are developing innovative techniques for data fusion, representation learning, and cross-modal understanding. As technology continues to evolve and more multimodal data becomes available, we can expect to see even more groundbreaking applications emerge.

For businesses in Chicago and beyond, understanding and embracing multimodal AI is becoming increasingly important. Partnering with the right AI development company in Chicago, and with the right professionals, will be crucial for leveraging the transformative power of this technology and building the intelligent systems of the future. The rise of multimodal AI signifies a pivotal moment in the evolution of artificial intelligence, bringing us closer to systems that can understand and interact with the world in a more meaningful and comprehensive way.